
Re: When an Excel row is created, modified, or deleted

04-11-2024 10:42 AM - last edited 04-11-2024 10:54 AM


When an Excel row is created, modified, or deleted

Template workaround for "When an Excel row is created", "When an Excel row is modified", and/or "When an Excel row is deleted" triggers.

Limitations

• Initially set up only for Excel tables with fewer than 100,000 records and less than 100MB of content. Version b allows for more than 100,000 records, but still has the 100MB content limit.

• Each record must be unique: the table needs a column, or combination of columns, whose value is unique & not empty for every single row. In other words, it must include a primary key.

• Every time a new row is added, it must include the primary key column(s) value(s).

• For large tables, the initial set-up may have a 10+ minute delay between the last edits & running whatever actions one adds for the rest of the flow. Version b includes a set-up that is almost twice as fast, but even that will still see a delay of several minutes after the last edit when the selected Excel file has tens of thousands of rows.
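The primary key requirement above can be checked before pointing the flow at a table. The following is a minimal Python sketch, not part of the template: it assumes the table has been exported to a list of row dictionaries, and the function name `check_primary_key` and the column names `OrderId` and `Line` are hypothetical.

```python
from collections import Counter


def check_primary_key(rows, key_columns):
    """Return (indexes of rows with an empty key part, keys used more than once)."""
    keys = []
    empty = []
    for index, row in enumerate(rows):
        parts = []
        for col in key_columns:
            value = row.get(col)
            # Treat a missing value or blank text as empty; keep 0 as a real value.
            parts.append("" if value is None else str(value).strip())
        key = tuple(parts)
        if any(part == "" for part in key):
            empty.append(index)
        keys.append(key)
    counts = Counter(keys)
    duplicates = sorted(key for key, count in counts.items() if count > 1)
    return empty, duplicates


# Hypothetical sample data: the key is the combination OrderId + Line.
rows = [
    {"OrderId": "A1", "Line": "1", "Qty": "5"},
    {"OrderId": "A1", "Line": "2", "Qty": "3"},
    {"OrderId": "A1", "Line": "2", "Qty": "7"},  # duplicate key
    {"OrderId": "", "Line": "1", "Qty": "1"},    # empty key part
]
empty, duplicates = check_primary_key(rows, ["OrderId", "Line"])
print(empty, duplicates)
```

If either result is non-empty, the chosen column(s) would not work as the unique key the flow expects.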

Example Run

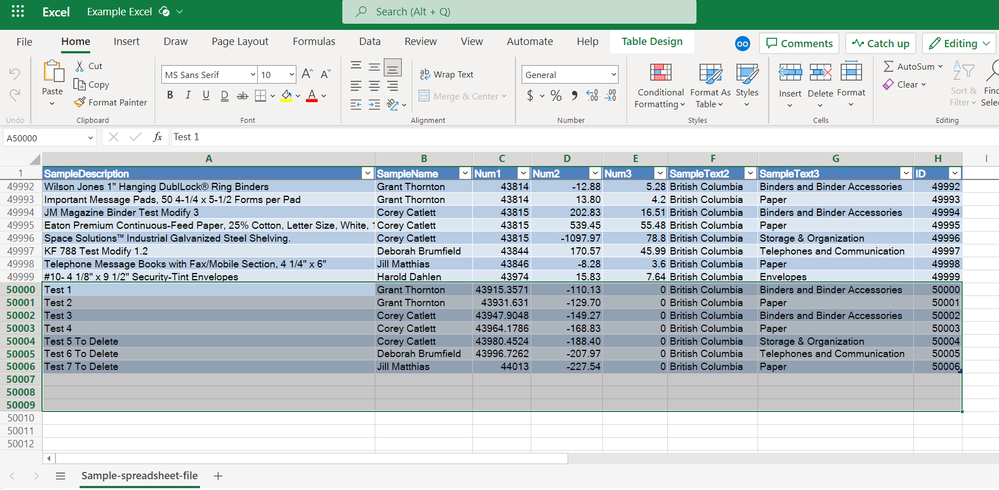

Initial table

Table edits

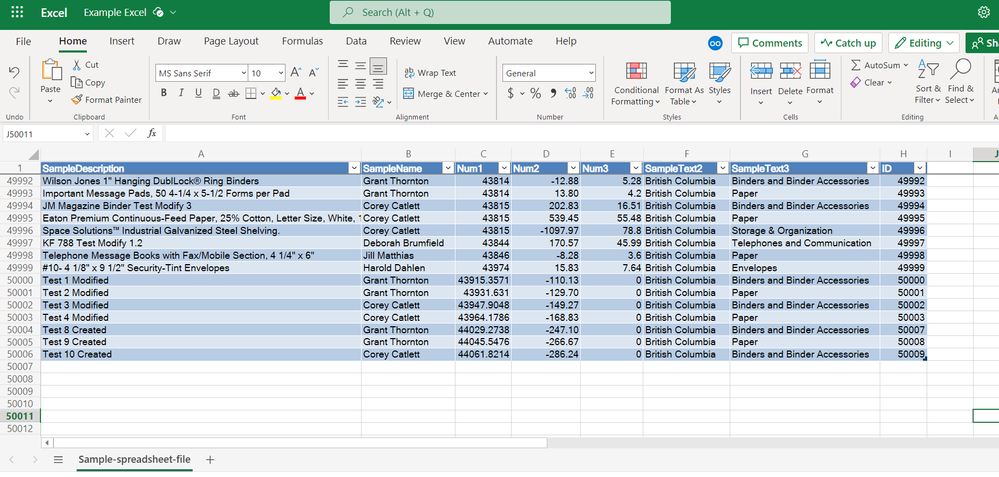

Flow run for the 50,000 record table

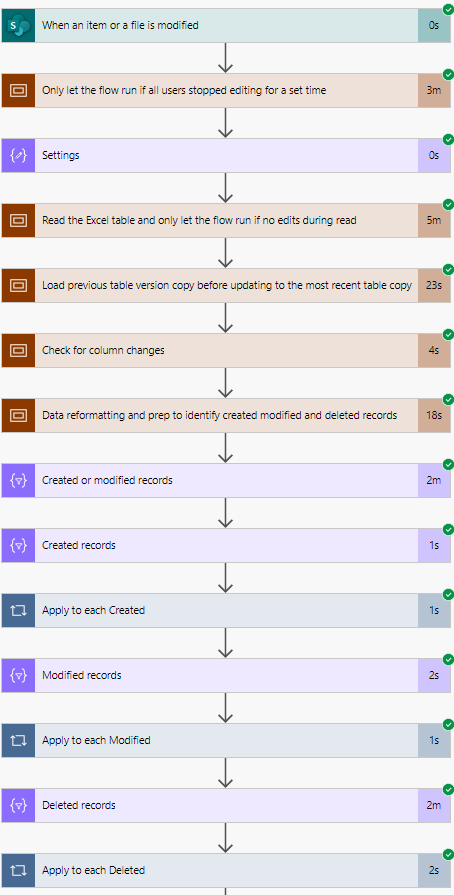

Example email message with HTML tables for each filtered set of records

HTML table styling: https://ryanmaclean365.com/2020/01/29/power-automate-html-table-styling/

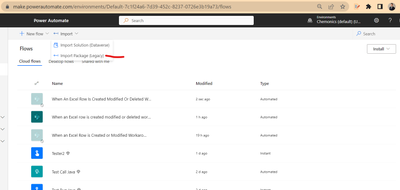

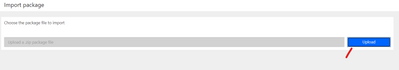

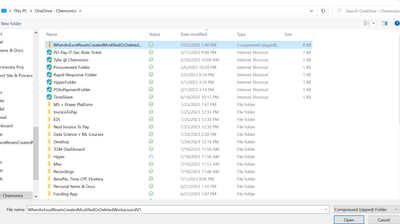

Template Flow Import & Set-Up

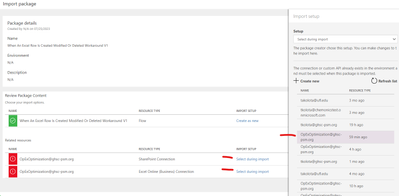

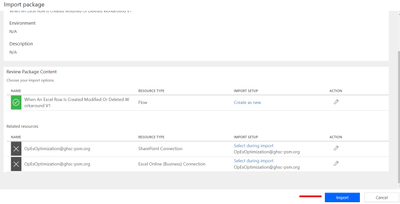

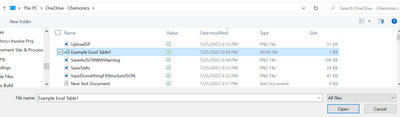

Go to the bottom of the post, download the zip file, go to the page for your flows, and select the legacy import option

Upload the import, change the connections, & select the import button

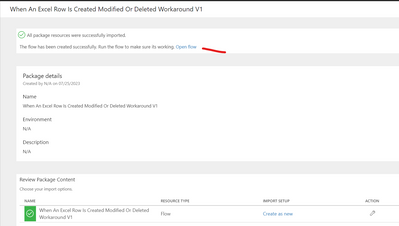

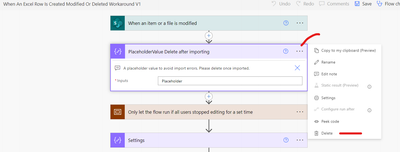

Select the Open Flow link & delete the initial placeholder value compose action

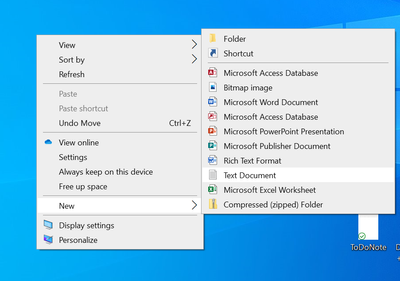

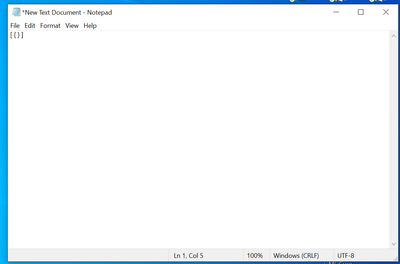

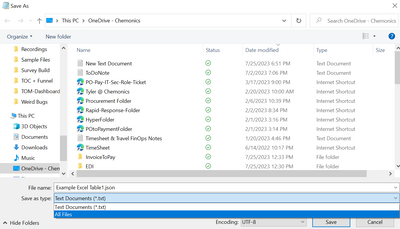

Switch to your desktop & create a text file. Input something that follows a JSON structure, then save the file with a .json file extension while changing the Save as type to All files. Preferably give the file a name that refers to the target Excel workbook name & table name.
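As an alternative to creating the placeholder by hand, here is a small Python sketch that writes one. It assumes an empty JSON array is an acceptable placeholder (the post only asks for "something that follows a JSON structure"), and the file name `SalesWorkbook_Table1.json` is hypothetical. The file is written as plain UTF-8 without a byte order mark.

```python
import json
import tempfile
from pathlib import Path


def write_placeholder(path):
    """Write an empty JSON array as plain UTF-8 and return the raw bytes written."""
    # encoding="utf-8" does not add a byte order mark (unlike "utf-8-sig").
    Path(path).write_text(json.dumps([]), encoding="utf-8")
    return Path(path).read_bytes()


# Demo in a temporary folder; in practice, write to the desktop and upload to SharePoint.
with tempfile.TemporaryDirectory() as folder:
    target = Path(folder) / "SalesWorkbook_Table1.json"  # hypothetical name
    raw = write_placeholder(target)

print(raw)
```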

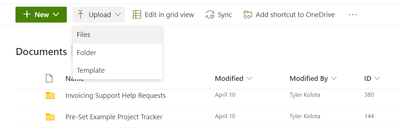

Go to the same SharePoint library as your Excel workbook with the Excel table you are creating the flow for & upload the new JSON file. Make sure it is set up in a place where no one else will delete or alter it.

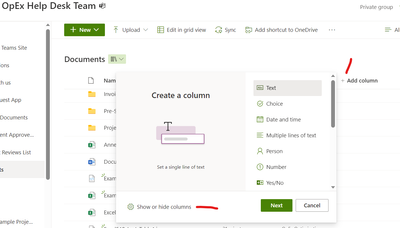

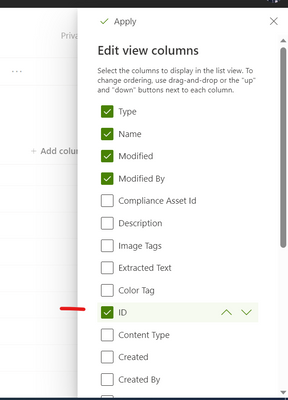

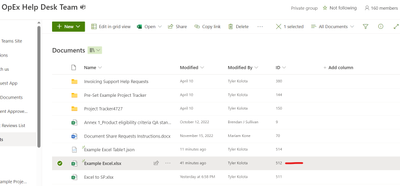

In the list for the SharePoint library select the Add column option, select the Show or hide columns option, then select to show the ID column & Apply the changes. Once the ID column is showing, go to the row with the Excel workbook you are creating this for & copy the file row ID.

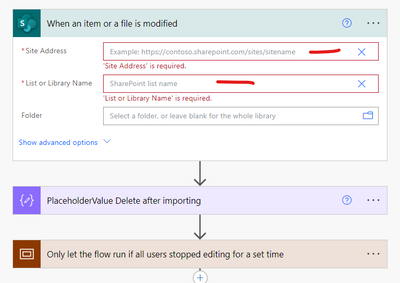

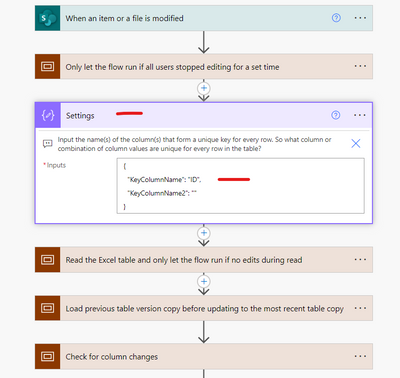

Switch back to the flow. Open the 1st trigger action "When an item or file is modified" & choose the site & document library for your Excel file.

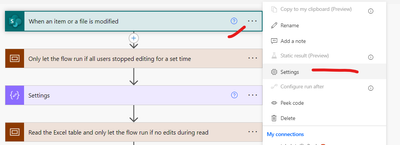

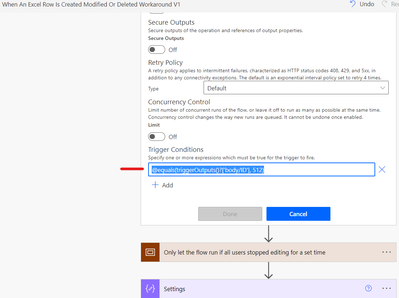

Then go to the 3 dots on the 1st trigger action "When an item or file is modified" & open the Settings. Select the +Add button under Trigger Conditions, replace InsertIdNumberHere in the expression below with the file row ID you copied, paste the completed expression into the Trigger Conditions, & select the Done button.

@equals(triggerOutputs()?['body/ID'], InsertIdNumberHere)

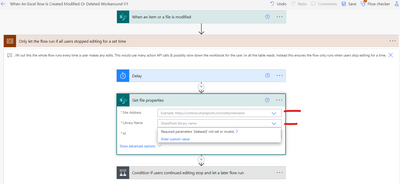

Open the 1st Scope of the flow "Only let the flow run if all users stopped editing for a set time", Open the "Get file properties" action, and select the Site address & SharePoint Library Name for your Excel workbook

Then open the "Settings" action & input the name(s) of the column(s) that form a unique key for every row, that is, the column or combination of columns whose values are unique for every row in the table.

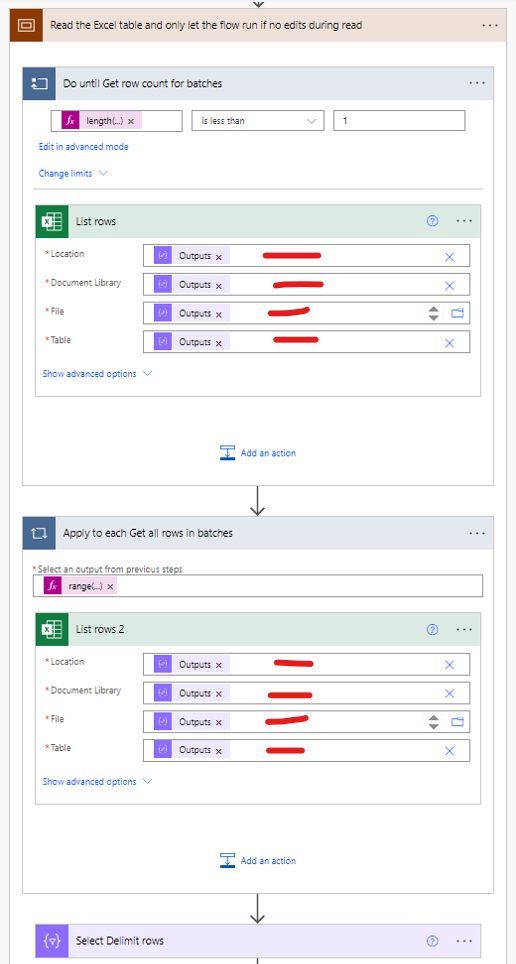

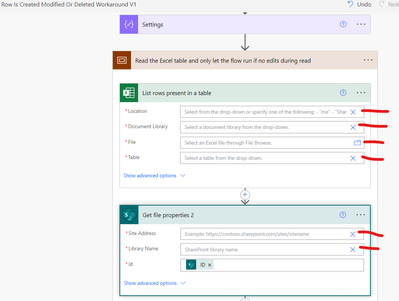

Then go to the Scope below that "Read the Excel table and only let the flow run if no edits during read" and in the Excel List rows & Get file properties 2 actions select the Excel Location, Document Library, File, Table, Site Address, & Library Name

If you are using version b, then you will need to input the Excel file & table references in two places:

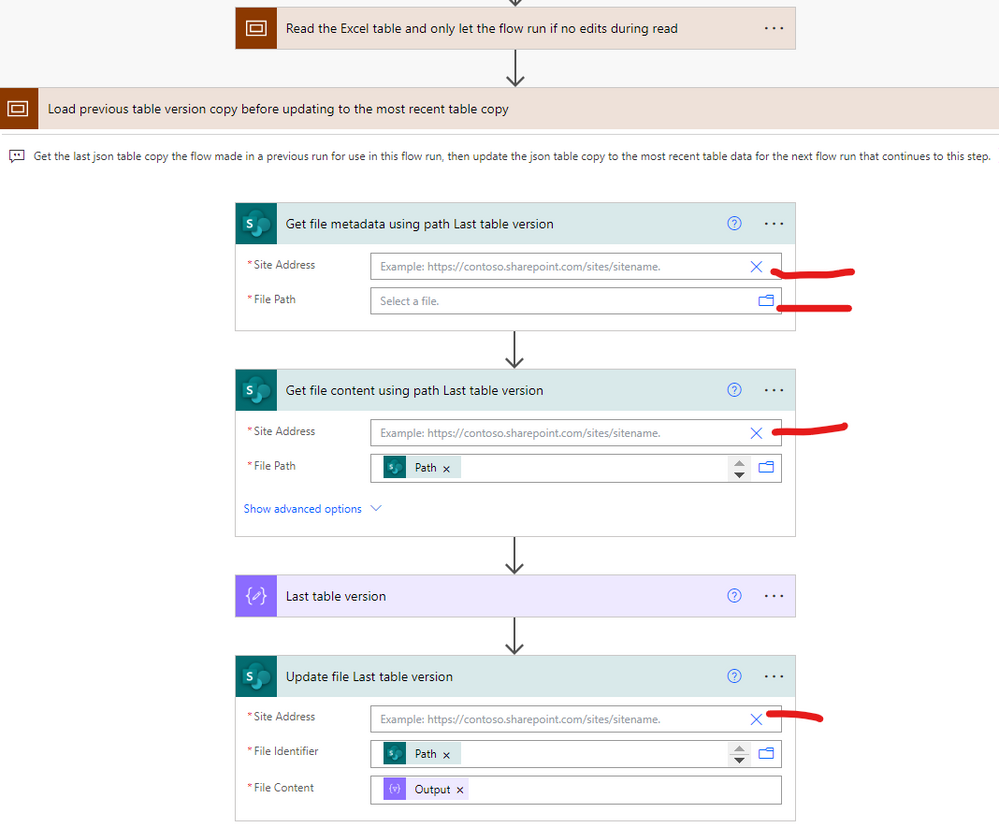

Then go to the next Scope "Load previous table version copy before updating to the most recent table copy". In the Get file action select the Site Address & File Path, and in the Update file action select the Site Address & file, for the JSON file you uploaded to the SharePoint library. This file will hold a JSON array copy of the Excel table between flow runs.

Save & run the flow once so any existing data in your Excel table is recorded in the previous table version JSON file. This will prevent any later actions you add from running for every existing row the 1st time the flow is triggered. By running it once without added actions, it will correctly run your added actions for only the edited rows the 1st time it is triggered later.

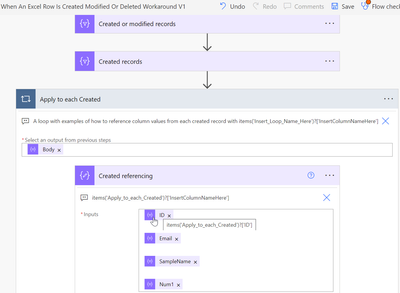

That is all the set-up for the template. It runs whenever anything in the Excel workbook is modified and outputs separate sets (arrays) of the Created records, Modified records & Deleted records. From here you can use the example Apply to each loops & example compose action inputs to set up the actions you want the flow to run for newly created records, for modified records, and/or for deleted records.

In the example Apply to each loops, the From field is filled with the array Body of the appropriate filter array action for the Created, Modified, or Deleted records. Within the loop you can reference any record column value with the expression below by inputting your chosen Apply to each loop name & your chosen column name.

items('Insert_Loop_Name_Here')?['InsertColumnNameHere']
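To make the three outputs concrete, here is a minimal Python sketch of the comparison idea: the previous table copy and the current table read are matched on the primary key to get Created, Modified, and Deleted sets. This is an illustration only and not the template's actual implementation, which uses filter array actions inside the flow. The names `diff_tables` and `key_of` and the sample columns are hypothetical.

```python
def key_of(row, key_columns):
    """Build the primary key tuple for one row."""
    return tuple(row.get(col) for col in key_columns)


def diff_tables(previous, current, key_columns):
    """Compare two table snapshots and return (created, modified, deleted) rows."""
    prev_by_key = {key_of(row, key_columns): row for row in previous}
    curr_by_key = {key_of(row, key_columns): row for row in current}
    created = [row for key, row in curr_by_key.items() if key not in prev_by_key]
    deleted = [row for key, row in prev_by_key.items() if key not in curr_by_key]
    modified = [
        row
        for key, row in curr_by_key.items()
        if key in prev_by_key and prev_by_key[key] != row
    ]
    return created, modified, deleted


# Hypothetical snapshots: the stored JSON copy vs. the latest table read.
previous = [
    {"Id": "1", "Name": "Ann"},
    {"Id": "2", "Name": "Bob"},
    {"Id": "3", "Name": "Cy"},
]
current = [
    {"Id": "1", "Name": "Ann"},
    {"Id": "2", "Name": "Robert"},
    {"Id": "4", "Name": "Dee"},
]
created, modified, deleted = diff_tables(previous, current, ["Id"])
print(created, modified, deleted)
```

The sketch also shows why the primary key matters: without a unique, non-empty key, a changed row cannot be told apart from one deleted row plus one created row.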

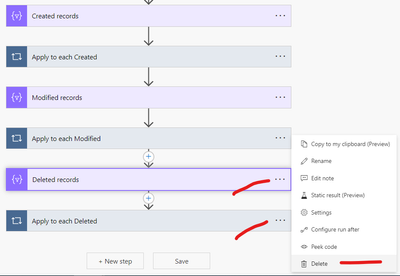

Also if you do not plan to ever use one of the Created, Modified, or Deleted outputs, then you can delete both the filter array action for those records & the associated Apply to each loop

And if you only want the flow actions to run when changes are made to specific columns, you can set the Excel List rows table read to only Select the primary key column & those specific columns to check for changes as described here.

Version B:

Version B replaces the single Excel List rows connector with 100,000 pagination with a somewhat more complicated set-up. It determines a rough approximation of the total rows in the table, loads several 5000 row batches from the table concurrently (in parallel), and then combines all those 5000 row batches into a single JSON array output similar to the output of the standard 100,000 pagination Excel List rows connector.
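The batching idea can be sketched in Python as follows. This is an illustration under stated assumptions, not the flow itself: `load_batch` is a hypothetical stand-in for one paged read of the table, the in-memory list stands in for the Excel table, and the row estimate is assumed to be at least the real row count so that no rows are missed.

```python
from concurrent.futures import ThreadPoolExecutor

BATCH_SIZE = 5000


def load_batch(table, skip, top):
    """Hypothetical stand-in for one paged read: skip `skip` rows, take `top` rows."""
    return table[skip:skip + top]


def load_all(table, approx_row_count, batch_size=BATCH_SIZE, workers=4):
    """Load batches in parallel and combine them into one list, in table order."""
    offsets = range(0, approx_row_count, batch_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the order of the offsets, not completion order.
        batches = list(pool.map(lambda skip: load_batch(table, skip, batch_size), offsets))
    return [row for batch in batches for row in batch]


# 12,345 rows with a rough over-estimate of 15,000 gives three batches.
table = [{"Id": i} for i in range(12_345)]
rows = load_all(table, approx_row_count=15_000)
print(len(rows))
```

An over-estimate only produces extra empty batches, which is why a rough approximation of the row count is enough.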

Reasons to use Version B:

If you need a faster flow with less time between the last Excel table edits & the triggering of flow actions for those edits.

If you want to use this on an Excel table with more than 100,000 rows that is still below 100MB total content.

If you have a lower level Microsoft / Office365 license and only have a maximum pagination / Excel connector load of 5000.

If you have any trouble importing through the standard import method, see this post to import through a solution package.

Thanks for any feedback,

Please subscribe to my YouTube channel (https://youtube.com/@tylerkolota?si=uEGKko1U8D29CJ86).

And reach out on LinkedIn (https://www.linkedin.com/in/kolota/) if you want to hire me to consult or build more custom Microsoft solutions for you.

watch?v=85QAQ-tb1M8


@takolota genius. Error is below:

Unable to process template language expressions in action 'Last_table_version' inputs at line '0' and column '0': 'The template language function 'json' parameter is not valid. The provided value '[{"Column1":"DATA","Column2":""},{"Column1":"DATA","Column2":""},{"Column1":"DATA","Column2":""}]' cannot be parsed: 'Unexpected character encountered while parsing value: . Path '', line 0, position 0.'. Please see https://aka.ms/logicexpressions#json for usage details.'.

The expression that is currently in the step for Last table version is:

json(base64ToString(outputs('Get_file_content_using_path_Last_table_version')?['body']?['$content']))


@takolota With version 1_0_0_2, 3 missing dependencies were shown, but with 1_0_0_3 it is reduced to 2 missing dependencies.

Can we include the other 2 dependencies as well?

[{"SolutionValidationResultType":"Error","Message":"The following solution cannot be imported: WhenanExcelrowiscreatedmodifiedordeleted. Some dependencies are missing. The missing dependencies are : <MissingDependencies><MissingDependency><Required type=\"connectionreference\" displayName=\"new_sharedoffice365_d394c\" solution=\"Active\" id.connectionreferencelogicalname=\"new_sharedoffice365_d394c\" /><Dependent type=\"29\" displayName=\"When An Excel Row Is Created Modified Or Deleted V1.1\" id=\"{ca4323f0-8072-ee11-9ae7-000d3a11481f}\" /></MissingDependency><MissingDependency><Required type=\"connectionreference\" displayName=\"new_sharedoffice365_dc3bc\" solution=\"Active\" id.connectionreferencelogicalname=\"new_sharedoffice365_dc3bc\" /><Dependent type=\"29\" displayName=\"When An Excel Row Is Created Modified Or Deleted V1.1OneDrive\" id=\"{63633778-cef5-ee11-a1fd-000d3a8d1f5f}\" /></MissingDependency></MissingDependencies> , ProductUpdatesOnly : False","ErrorCode":-2147188707,"AdditionalInfo":null}]


Strange, so it is saying it encounters an unexpected character for the very 1st character of the string.

But what you have typed there all looks like a normal json array without any irregular characters.


I think the last two were related to email actions so I just removed the email actions entirely.


After many hours of troubleshooting, I found this solution which temporarily resolved the issue:

c# - Unexpected character encountered while parsing value - Stack Overflow

I confirmed that the file is indeed being saved as UTF-8 BOM by the flow. If I open the TableVersionJSON.JSON and convert it back to UTF-8, it runs perfectly, then fails on the next run because something in the save action is saving it as UTF-8 BOM. I need to run this type of template on two separate Excel files of identical format, with identical columns and headers but different data in the values. I created two identical Power Automate flows that differ only in the file locations. The other file/flow works beautifully and does not have this issue. I am trying to figure out what in the values of the Excel sheet could be causing this issue, but I am stuck. I have tried deleting the flow with errors, copying over the flow that works, and updating references, and it still fails. I will keep troubleshooting and report back if I find anything, but any input or direction would be greatly appreciated as well.
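The failure mode described above can be reproduced outside Power Automate. The following Python sketch is an illustration only and says nothing about what the flow's save action does internally: it shows a JSON parser rejecting text whose first character is a byte order mark, and the same bytes parsing once the BOM is stripped during decoding. The function names `parse_strict` and `parse_tolerant` are hypothetical.

```python
import codecs
import json

payload = '[{"Column1":"DATA","Column2":""}]'
# Same content, but saved as "UTF-8 with BOM": three extra bytes at the start.
with_bom = codecs.BOM_UTF8 + payload.encode("utf-8")


def parse_strict(raw):
    """Decode as plain UTF-8; a BOM stays in the text as the first character."""
    return json.loads(raw.decode("utf-8"))


def parse_tolerant(raw):
    """Decode with utf-8-sig, which drops a leading BOM if one is present."""
    return json.loads(raw.decode("utf-8-sig"))


try:
    parse_strict(with_bom)
    strict_failed = False
except json.JSONDecodeError:
    # The parser stops at position 0, before any visible character.
    strict_failed = True

rows = parse_tolerant(with_bom)
print(strict_failed, rows)
```

The BOM is invisible in most editors, which would explain why the quoted value in the error message looks like a normal JSON array.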


@takolota I was able to resolve the issue by storing the previous table data (TableVersionJSON) as a CSV rather than a JSON file, then converting to and from JSON format where needed. It takes a lot of extra steps, but it is working. I would still love to learn whether there is a cleaner way to stop the JSON file from being converted to UTF-8 BOM format, but this band-aid does work.


@takolota I really appreciate your continuous efforts and conversation. Yes, I am able to proceed to the next screen. The weird thing is that although I have selected an account to sign in and the tick mark is enabled, the import button is not enabled. Am I missing anything here?

After that, I switched to classic mode to see whether I could get more info and found this:

System.ServiceModel.FaultException`1[Microsoft.Xrm.Sdk.OrganizationServiceFault]: SecLib::CheckPrivilege failed. User: USER_GUID_RePLACED, PrivilegeName: prvWriteEntity, PrivilegeId: 2a4e96d7-7bf9-4578-9d4e-1e494c33c1bf, Required Depth: Basic, BusinessUnitId: BUSINESS_UNIT_ID_RePLACED, MetadataCache Privileges Count: 4284, User Privileges Count: 1146 (Fault Detail is equal to Exception details:

ErrorCode: 0x80040220

Any idea what privilege and where it needs to be configured?


Here is a legacy import package for the Power Automate menu...


@bommannanr I said it is a legacy import. You must go to the Power Automate page & select import there.

It is not a solution package.